
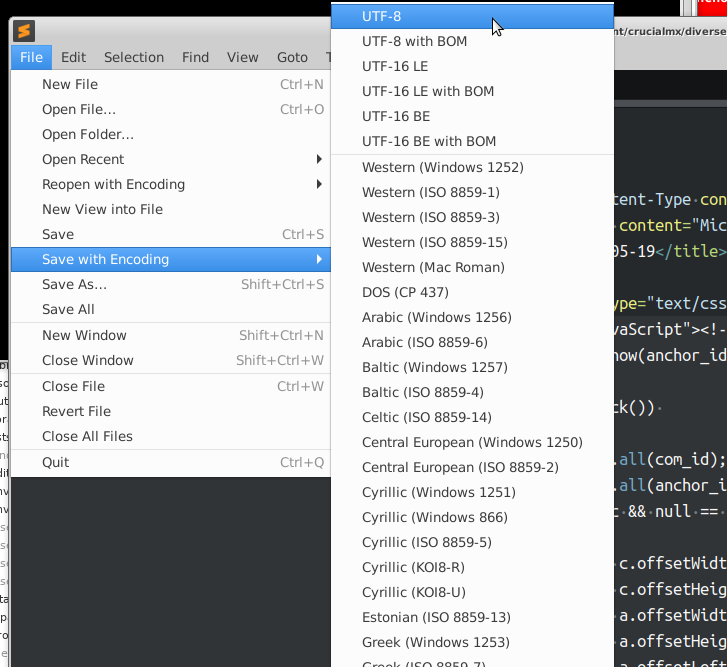

UTF-8 is a variable-length character encoding standard used for electronic communication. Defined by the Unicode Standard, the name is derived from Unicode Transformation Format – 8-bit. UTF-8 is capable of encoding all 1,112,064 valid Unicode code points using one to four one-byte (8-bit) code units. Code points with lower numerical values, which tend to occur more frequently, are encoded using fewer bytes. It was designed for backward compatibility with ASCII: the first 128 characters of Unicode, which correspond one-to-one with ASCII, are encoded using a single byte with the same binary value as ASCII, so that valid ASCII text is also valid UTF-8-encoded Unicode. UTF-8 was designed as a superior alternative to UTF-1, a proposed variable-length encoding with partial ASCII compatibility that lacked some features, including self-synchronization and fully ASCII-compatible handling of characters such as slashes.

Ken Thompson and Rob Pike produced the first implementation for the Plan 9 operating system in September 1992. This led to its adoption by X/Open as its specification for FSS-UTF, which was first officially presented at USENIX in January 1993 and subsequently adopted by the Internet Engineering Task Force (IETF) in RFC 2277 (BCP 18) for future internet standards work, replacing Single Byte Character Sets such as Latin-1 in older RFCs.

UTF-8 is the dominant encoding for the World Wide Web (and internet technologies), accounting for 98.2% of all web pages, 99.0% of the top 10,000 pages, and up to 100% for many languages, as of 2024. Virtually all countries and languages have 95% or more use of UTF-8 encodings on the web. UTF-8 results in fewer internationalization issues than any alternative text encoding, and it has been implemented in all modern operating systems, including Microsoft Windows, and in standards such as JSON, where, as is increasingly the case, it is the only allowed form of Unicode.

The official name for the encoding is UTF-8, the spelling used in all Unicode Consortium documents. Most standards officially list it in upper case as well, but all that do are also case-insensitive, and utf-8 is often used in code. Some other spellings may also be accepted by standards; for example, web standards (which include CSS, HTML, XML, and HTTP headers) explicitly allow utf8 (and disallow "unicode") and many other aliases for encodings. The Internet Assigned Numbers Authority (IANA) also lists csUTF8 as the only alias, which is rarely used. In Windows, UTF-8 is codepage 65001. In MySQL, UTF-8 is called utf8mb4, with utf8mb3 and its alias utf8 being a subset encoding for characters in the Basic Multilingual Plane. In HP PCL, the Symbol-ID for UTF-8 is 18N. In Oracle Database (since version 9.0), AL32UTF8 means UTF-8. See also CESU-8, an almost-synonym of UTF-8 that should rarely be used. UTF-8-BOM and UTF-8-NOBOM are sometimes used for text files that do or do not contain a byte-order mark (BOM), respectively. In Japan especially, UTF-8 encoding without a BOM is sometimes called UTF-8N.

UTF-8 encodes code points in one to four bytes, depending on the value of the code point. In the following table, the x characters are replaced by the bits of the code point:

| First code point | Last code point | Byte 1 | Byte 2 | Byte 3 | Byte 4 |
|---|---|---|---|---|---|
| U+0000 | U+007F | 0xxxxxxx | | | |
| U+0080 | U+07FF | 110xxxxx | 10xxxxxx | | |
| U+0800 | U+FFFF | 1110xxxx | 10xxxxxx | 10xxxxxx | |
| U+10000 | U+10FFFF | 11110xxx | 10xxxxxx | 10xxxxxx | 10xxxxxx |

The first 128 code points (ASCII) need one byte. The next 1,920 code points need two bytes, which covers the remainder of almost all Latin-script alphabets, as well as IPA extensions, Greek, Cyrillic, Coptic, Armenian, Hebrew, Arabic, Syriac, Thaana and N'Ko alphabets, and Combining Diacritical Marks. Three bytes are needed for the remaining 61,440 code points of the Basic Multilingual Plane (BMP), including most Chinese, Japanese and Korean characters.
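To make the one-to-four-byte scheme concrete, here is a minimal Python sketch of an encoder for single code points. It is an illustration, not a production codec: the function names `encode_code_point` and `encode` are hypothetical, it assumes the input is a Unicode scalar value (surrogates U+D800 to U+DFFF are rejected), and it cross-checks its output against Python's built-in `str.encode("utf-8")`. The high bits of the lead byte (0, 110, 1110 or 11110) signal the sequence length, and every continuation byte has the form 10xxxxxx.

```python
from collections import Counter


def encode_code_point(cp: int) -> bytes:
    """Encode one Unicode scalar value as UTF-8 (illustrative, not optimized)."""
    if not 0 <= cp <= 0x10FFFF:
        raise ValueError(f"code point out of range: {cp:#x}")
    if 0xD800 <= cp <= 0xDFFF:
        # Surrogates are reserved for UTF-16 and are not valid in UTF-8.
        raise ValueError(f"surrogate code point: {cp:#x}")
    if cp < 0x80:
        # 1 byte: 0xxxxxxx, identical to ASCII.
        return bytes([cp])
    if cp < 0x800:
        # 2 bytes: 110xxxxx 10xxxxxx
        return bytes([0xC0 | (cp >> 6), 0x80 | (cp & 0x3F)])
    if cp < 0x10000:
        # 3 bytes: 1110xxxx 10xxxxxx 10xxxxxx
        return bytes([0xE0 | (cp >> 12),
                      0x80 | ((cp >> 6) & 0x3F),
                      0x80 | (cp & 0x3F)])
    # 4 bytes: 11110xxx 10xxxxxx 10xxxxxx 10xxxxxx
    return bytes([0xF0 | (cp >> 18),
                  0x80 | ((cp >> 12) & 0x3F),
                  0x80 | ((cp >> 6) & 0x3F),
                  0x80 | (cp & 0x3F)])


def encode(text: str) -> bytes:
    """Encode a whole string by concatenating per-code-point encodings."""
    return b"".join(encode_code_point(ord(ch)) for ch in text)


if __name__ == "__main__":
    # Cross-check against Python's built-in codec, one sample of each length.
    for sample in ["A", "é", "€", "😀"]:
        assert encode(sample) == sample.encode("utf-8")
        print(f"U+{ord(sample):04X} -> {encode(sample).hex(' ')}")

    # Tally encoded lengths across the BMP, skipping the surrogate range.
    lengths = Counter(len(encode_code_point(cp))
                      for cp in range(0x10000)
                      if not 0xD800 <= cp <= 0xDFFF)
    assert (lengths[1], lengths[2], lengths[3]) == (128, 1920, 61440)
```

The tally at the end reproduces the figures given in the text: 128 one-byte, 1,920 two-byte and 61,440 three-byte code points in the BMP once the surrogates are excluded.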
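Self-synchronization, one of the features UTF-1 lacked, falls out of UTF-8's byte layout: continuation bytes always match 10xxxxxx, so a reader dropped at an arbitrary byte offset can find the nearest character boundary just by skipping them. Likewise, every byte of a multi-byte sequence has its high bit set, so an ASCII byte such as `/` (0x2F) can never appear inside an encoded non-ASCII character. A rough Python sketch, assuming the input is already valid UTF-8 (the helper names are hypothetical):

```python
def is_continuation(byte: int) -> bool:
    """True for UTF-8 continuation bytes, which always look like 10xxxxxx."""
    return byte & 0xC0 == 0x80


def char_start(data: bytes, i: int) -> int:
    """Index of the first byte of the character that contains data[i].

    Assumes `data` is valid UTF-8, so at most three steps back are needed.
    """
    while i > 0 and is_continuation(data[i]):
        i -= 1
    return i


def next_boundary(data: bytes, i: int) -> int:
    """Smallest index >= i that starts a character (or len(data) at the end)."""
    while i < len(data) and is_continuation(data[i]):
        i += 1
    return i


if __name__ == "__main__":
    data = "a€/b".encode("utf-8")  # b'a\xe2\x82\xac/b'
    # Offset 2 lands inside the three-byte encoding of '€' (bytes 1 to 3).
    assert char_start(data, 2) == 1
    assert data[next_boundary(data, 2):].decode("utf-8") == "/b"
    # No byte of a multi-byte sequence falls in the ASCII range 0x00-0x7F ...
    assert all(b >= 0x80 for b in "€😀".encode("utf-8"))
    # ... so splitting raw bytes on b"/" never cuts a character in half.
    assert [p.decode("utf-8") for p in data.split(b"/")] == ["a€", "b"]
```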
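The case-insensitive spellings and the UTF-8-BOM / UTF-8-NOBOM distinction also show up in everyday tooling. As one example, Python's standard `codecs` module resolves `UTF-8`, `utf-8`, `utf8` and `UTF8` to the same codec, and provides a separate `utf-8-sig` codec that writes the three-byte BOM (EF BB BF) and strips it when decoding. Note that `utf-8-sig` is Python's own codec name, not a standard alias:

```python
import codecs

# Different spellings of the name resolve to one codec named "utf-8".
for name in ["UTF-8", "utf-8", "utf8", "UTF8"]:
    assert codecs.lookup(name).name == "utf-8"

text = "héllo"

# "utf-8-sig" prepends the UTF-8 byte-order mark, EF BB BF.
with_bom = text.encode("utf-8-sig")
assert codecs.BOM_UTF8 == b"\xef\xbb\xbf"
assert with_bom == codecs.BOM_UTF8 + text.encode("utf-8")

# Decoding with "utf-8-sig" strips the BOM; plain "utf-8" keeps it as U+FEFF.
assert with_bom.decode("utf-8-sig") == text
assert with_bom.decode("utf-8") == "\ufeff" + text

# "utf-8-sig" also accepts BOM-less input, so it can read both kinds of file.
assert text.encode("utf-8").decode("utf-8-sig") == text
```

Reading with `utf-8-sig` is a reasonable default when files may or may not carry a BOM, since it handles both cases.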
